Educational institution integrates High Availability Cluster with Open-E DSS V7

The Duale Hochschule Baden-Württemberg Mosbach has been a customer of EUROstor since 2010 and has already bought several clusters from this manufacturer. Because running such a large educational site involves data services for approximately 230 employees and 3,600 students, the university required a centralized, high-availability storage system that provides iSCSI capacity for VMware servers.

For high availability, they were looking for a solution with separate storage cluster nodes. The system also had to be easily expandable, with an initial configuration of 10 TB on high-performance hard disks. Additionally, it had to offer good performance at a good price, as well as high redundancy with replicated data.

Solution

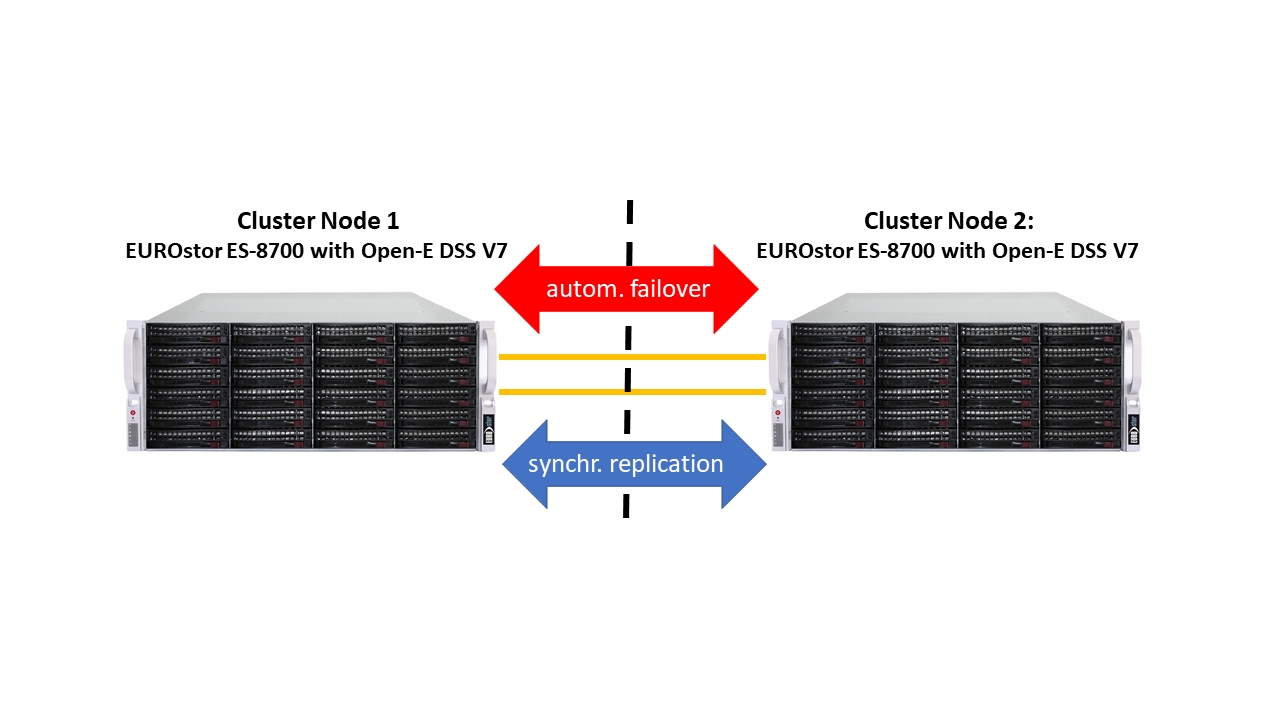

EUROstor offered the Duale Hochschule Baden-Württemberg Mosbach an ES-8700 cluster of two servers with high-performance Areca RAID controllers and 10K hard disks. The Open-E DSS V7 storage software installed on it enables synchronous data replication over a dual 10 Gbit Ethernet link and performs an automatic failover if one of the nodes becomes unavailable. The system was designed to be easily expandable so that it can keep up with the rapidly growing amount of critical data.

For this reason, each 36-bay system is initially populated with only 12 disks, which leaves free bays and makes expansion on the fly easy and uncomplicated.

| Configuration per cluster node | |
|---|---|
| Server | 36-bay server |
| Processor | Intel® E-1650 v4 processor |
| RAM | 32 GB DDR4 RAM |
| Ethernet | 2x 1GbE Ethernet RJ45 onboard; 2x dual-port 10GbE Ethernet SFP+ card |
| HDD | 12x 1200 GB SAS 10K HDDs per system |
| RAID | ARECA 1883 12 Gbit RAID controller incl. cache protection by SuperCap |
| Software | Open-E DSS V7 license (unlimited) |
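
To relate the disk configuration to the 10 TB requirement, the following minimal sketch estimates raw and usable capacity per node. The case study does not state which RAID level was used, so the RAID 5 and RAID 6 figures are assumptions for illustration only; the helper name `usable_tb` is hypothetical, and capacities are in decimal terabytes (1 TB = 1000 GB).

```python
# Figures taken from the configuration table (per cluster node).
DISK_COUNT = 12        # disks installed initially
DISK_SIZE_GB = 1200    # capacity of each SAS disk
TOTAL_BAYS = 36        # drive bays in the chassis
REQUIRED_TB = 10       # initial capacity requirement


def usable_tb(disks: int, size_gb: int, parity_disks: int) -> float:
    """Usable capacity in decimal TB for a single parity-based RAID set.

    parity_disks is 1 for RAID 5 and 2 for RAID 6. The RAID level is an
    assumption; the source does not say which one was configured.
    """
    return (disks - parity_disks) * size_gb / 1000


raw_tb = DISK_COUNT * DISK_SIZE_GB / 1000
raid5_tb = usable_tb(DISK_COUNT, DISK_SIZE_GB, parity_disks=1)
raid6_tb = usable_tb(DISK_COUNT, DISK_SIZE_GB, parity_disks=2)
free_bays = TOTAL_BAYS - DISK_COUNT

print(f"Raw capacity:            {raw_tb:.1f} TB")
print(f"Usable (RAID 5 assumed): {raid5_tb:.1f} TB")
print(f"Usable (RAID 6 assumed): {raid6_tb:.1f} TB")
print(f"Meets {REQUIRED_TB} TB with RAID 6: {raid6_tb >= REQUIRED_TB}")
print(f"Free bays for expansion: {free_bays}")
```

Under either assumed RAID level, 12 disks cover the 10 TB starting point, and two thirds of the bays remain free for later expansion. Because the data is replicated synchronously between the two nodes, the usable capacity of the cluster equals that of a single node.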

Customer feedback

Herrmann Römer, Head of IT Services

"We chose Open-E DSS V7 mainly because of our requirement for data replication combined with automatic failover for maximum availability of all data, which in our case is a must. This software, combined with the great EUROstor solution, is a guarantee that our IT environment has everything it needs."